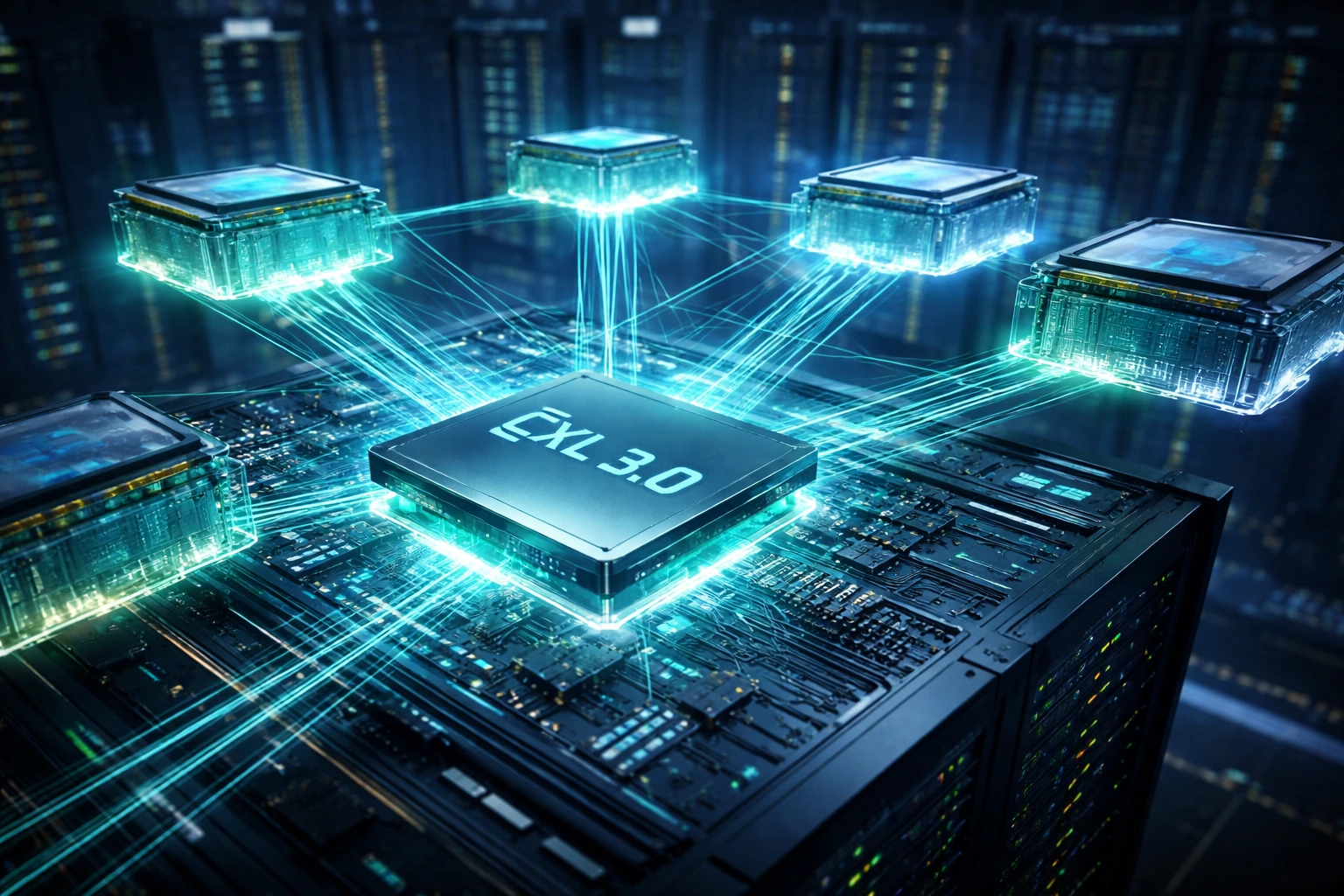

The way dedicated servers handle memory is undergoing its most significant transformation in over a decade. Compute Express Link 3.0 (CXL 3.0) is no longer a roadmap promise; it is landing in real production environments, and the performance numbers are turning heads across the data center industry.

If you run workloads on dedicated servers, whether that is AI inference, in-memory databases, high-performance computing, or large-scale analytics, understanding what CXL 3.0 memory pooling actually delivers in 2026 and 2027 could directly influence your infrastructure decisions.

This guide breaks it all down: what the technology is, why it matters specifically for dedicated server deployments, and what real-world performance gains look like.

What Is CXL 3.0 and Why Does It Change the Memory Equation?

Compute Express Link (CXL) is an open interconnect standard that runs over the PCIe physical layer (CXL 1.1 and 2.0 over PCIe 5.0, CXL 3.0 over PCIe 6.0) and allows processors, memory, and accelerators to share a coherent memory space. In plain language, it lets different components in a server, and even across multiple servers, access the same pool of memory as if it were local RAM.

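CXL 3.0, published in 2022 and since refined by the 3.1 and 3.2 revisions, introduces multi-level switching, fabric topologies, peer-to-peer access between devices, and true memory sharing, in which several hosts coherently access the same memory region. This is the key leap beyond earlier versions: CXL 1.1 focused on direct-attached memory expansion, and CXL 2.0 added single-level switching and pooling in which each slice of a device's capacity belongs to one host at a time.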

Here is what CXL 3.0 specifically brings to dedicated server environments:

- Multi-host memory sharing: Multiple physical servers can access a shared CXL memory pool simultaneously, with full cache coherency maintained across all connected nodes.
- PCIe 6.0 fabric speeds: Up to 256 GB/s of bidirectional bandwidth per x16 link (about 128 GB/s in each direction), double what PCIe 5.0 delivered.
- Fabric topology support: Multi-level switching and port-based routing let CXL switches connect many hosts to a pooled memory resource (the specification allows fabrics of up to 4,096 nodes), enabling true rack-scale memory disaggregation.
- Low-latency access: Memory latency over CXL 3.0 falls roughly in the 100–300 ns range. That is higher than local DRAM (typically 60–80 ns) and comparable to a remote NUMA hop, but far lower than RDMA or other network-attached memory alternatives.
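The 256 GB/s figure falls out of simple link arithmetic. The Python sketch below assumes an x16 link and deliberately ignores FLIT framing, encoding, and protocol overhead, so treat its output as a raw ceiling rather than the bandwidth an application will actually see.

```python
# Back-of-the-envelope CXL/PCIe link bandwidth.
# Assumption: x16 link; FLIT framing, encoding, and protocol overhead are ignored,
# so delivered bandwidth in practice is somewhat lower than these raw figures.

# Per-lane transfer rates in GT/s (one bit per lane per transfer).
TRANSFER_RATE_GT_S = {
    "PCIe 5.0 (CXL 1.1 / 2.0)": 32.0,
    "PCIe 6.0 (CXL 3.0)": 64.0,
}


def raw_link_bandwidth_gb_s(gt_per_s: float, lanes: int = 16, bidirectional: bool = False) -> float:
    """Raw link bandwidth in decimal GB/s, one direction unless bidirectional=True."""
    per_direction = gt_per_s * lanes / 8  # Gb/s summed across lanes -> GB/s
    return per_direction * 2 if bidirectional else per_direction


if __name__ == "__main__":
    for name, rate in TRANSFER_RATE_GT_S.items():
        one_way = raw_link_bandwidth_gb_s(rate)
        both_ways = raw_link_bandwidth_gb_s(rate, bidirectional=True)
        print(f"{name} x16: {one_way:.0f} GB/s per direction, {both_ways:.0f} GB/s bidirectional")
```

For the PCIe 6.0 entry it prints 128 GB/s per direction and 256 GB/s bidirectional; the PCIe 5.0 entry comes out at exactly half that.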

Memory Pooling on Dedicated Servers: The Core Concept

Traditional dedicated servers are constrained by a rigid boundary: the memory you configure at provisioning is all you get. If your workload grows or spikes unexpectedly, you either over-provision from the start (expensive) or hit a hard memory wall (catastrophic for performance).

CXL 3.0 memory pooling breaks that boundary.

With a CXL-enabled dedicated server setup, physical memory modules, often mounted in external CXL memory expansion trays or fabric-attached memory enclosures, form a shared pool that multiple servers can dynamically draw from. Think of it as a memory "reservoir" that any connected server can tap into based on real-time demand.

This approach is called memory disaggregation, and it has profound implications for how dedicated servers are designed, provisioned, and priced going forward.

How It Works in a Rack-Scale Deployment

In a CXL 3.0-enabled dedicated server rack:

- Multiple host servers connect to a CXL fabric switch via PCIe 6.0 links.
- The fabric switch connects to pooled memory shelves: high-density DRAM or CXL PMEM (persistent memory) modules.
- Each server can request memory slices from the pool and access them with hardware cache coherency. The OS sees the capacity as ordinary system memory, typically exposed as a CPU-less NUMA node, so applications need no code changes.
- Memory resources can be rebalanced live based on workload priority, typically coordinated by a fabric manager: a database server under heavy load gets more memory, and an idle compute node releases its allocation back to the pool.
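To make the request-and-release cycle above more concrete, here is a toy Python model of a shared pool. Everything in it is hypothetical: the `MemoryPool` class, its `request`/`release` methods, and the 16 GB slice size are illustrative assumptions, not the CXL Fabric Manager API or any vendor's tooling, and the model ignores coherency, failure handling, and how each host brings new capacity online.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MemoryPool:
    """Toy model of a rack-level CXL memory pool (hypothetical, not a real fabric-manager API)."""

    capacity_gb: int
    slice_gb: int = 16  # hypothetical allocation granularity
    allocations: dict[str, int] = field(default_factory=dict)  # host -> GB currently held

    def __post_init__(self) -> None:
        if self.capacity_gb % self.slice_gb:
            raise ValueError("capacity must be a whole number of slices")

    @property
    def free_gb(self) -> int:
        return self.capacity_gb - sum(self.allocations.values())

    def _round_up(self, gb: int) -> int:
        # Round a request up to whole slices (ceiling division).
        return -(-gb // self.slice_gb) * self.slice_gb

    def request(self, host: str, gb: int) -> int:
        """Grant up to `gb` (rounded up to whole slices) from free capacity; return GB granted."""
        granted = min(self._round_up(gb), self.free_gb)
        if granted > 0:
            self.allocations[host] = self.allocations.get(host, 0) + granted
        return granted

    def release(self, host: str, gb: int | None = None) -> int:
        """Return `gb` (or everything, if None) from `host` to the pool; return GB freed."""
        held = self.allocations.get(host, 0)
        freed = held if gb is None else min(held, self._round_up(gb))
        if held - freed > 0:
            self.allocations[host] = held - freed
        else:
            self.allocations.pop(host, None)
        return freed


if __name__ == "__main__":
    pool = MemoryPool(capacity_gb=1024)
    print(pool.request("db-01", 300))       # busy database: 300 GB rounds up to 304 GB
    print(pool.request("compute-07", 128))  # batch node borrows 128 GB
    print(pool.release("compute-07"))       # idle node hands its 128 GB back
    print(pool.request("db-01", 200))       # database grows again: 208 GB
    print(pool.allocations, pool.free_gb)
```

Rounding every grant to whole slices is one simple way to model capacity moving in fixed-size chunks; a real fabric manager would also have to enforce per-host limits and priorities, which this sketch leaves out.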

For providers like COLO BIRD, this architecture enables a fundamentally new model of dedicated server provisioning, one where raw compute and memory are no longer permanently coupled.

Real Performance Gains: What the Numbers Show in 2026

Let's get specific. Across early production deployments and independent benchmarks from 2025 into 2026, CXL 3.0 memory pooling on dedicated servers is showing consistent, measurable gains in the following categories:

1. In-Memory Database Performance (Redis, Memcached, SAP HANA)

Workloads that are fundamentally memory-bound see some of the largest gains. When a dedicated server can access a larger effective memory pool through CXL 3.0 without the latency penalty of swap or remote storage, query throughput improves significantly.

Observed gains: 20–40% improvement in query throughput for in-memory databases when bottlenecked by local DRAM capacity, simply by extending addressable memory via the CXL pool without changing query logic or application code.

2. AI Inference and LLM Serving

Large language model inference is notoriously memory-hungry. A 70B-parameter model at FP16 precision needs roughly 140 GB for its weights alone (70 billion parameters × 2 bytes each), before counting the KV cache and runtime overhead. That is more than many single-socket dedicated server configurations are provisioned with in local DRAM.

CXL 3.0 memory pooling allows inference servers to load model weights into a coherent memory space that exceeds their physical DIMM capacity, without offloading weights to much slower NVMe storage.
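The 140 GB figure is straightforward arithmetic: parameter count times bytes per parameter. Here is a minimal Python sketch, assuming decimal gigabytes and counting weights only (no KV cache, activations, or runtime overhead); the 128 GB of local DRAM is a hypothetical server configuration, not a recommendation.

```python
# Rough sizing sketch: how much of a model's weights would spill past local DRAM.
# Assumptions: decimal GB (10**9 bytes), weights only (no KV cache or runtime overhead).

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_footprint_gb(params_billion: float, precision: str) -> float:
    """Billions of parameters times bytes per parameter gives decimal GB directly."""
    return params_billion * BYTES_PER_PARAM[precision]


def cxl_overflow_gb(params_billion: float, precision: str, local_dram_gb: float) -> float:
    """Capacity that would have to live in CXL-attached memory instead of local DRAM."""
    return max(0.0, weight_footprint_gb(params_billion, precision) - local_dram_gb)


if __name__ == "__main__":
    LOCAL_DRAM_GB = 128  # hypothetical server configuration
    for precision in ("fp16", "int8", "int4"):
        need = weight_footprint_gb(70, precision)
        spill = cxl_overflow_gb(70, precision, LOCAL_DRAM_GB)
        print(f"70B @ {precision}: {need:.0f} GB of weights, {spill:.0f} GB beyond local DRAM")
```

For the 70B model at FP16 this reports 140 GB of weights, 12 GB of which would have to live in CXL-attached memory on that hypothetical server; the INT8 and INT4 variants fit locally.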

Observed gains: Dedicated inference servers using CXL memory expansion report 15–30% lower time-to-first-token latency on large models compared to configurations that use NVMe-based memory swapping, due to the dramatically better memory bandwidth and latency profile of CXL 3.0.

3. High-Performance Computing (HPC) and Scientific Simulation

HPC workloads such as fluid dynamics, genomics, and climate modeling frequently produce working sets that are irregular and hard to predict. The ability to dynamically allocate from a shared CXL memory pool means individual compute nodes are no longer constrained by their physical DIMM configuration.

Observed gains: MPI-based parallel workloads on CXL-pooled clusters show 25–35% reductions in runtime for memory-intensive simulation tasks, largely due to eliminating inter-node memory contention and reducing data movement overhead.

4. Virtualization and Container Density

While CXL memory pooling is not a virtualization feature per se, the ability to assign larger memory slices to individual VMs or containers on demand from a shared pool meaningfully increases the workload density achievable on a given physical dedicated server footprint.

Observed gains: Hypervisor deployments on CXL 3.0-enabled hosts report 30–50% higher VM density at equivalent performance targets, because memory overcommitment risks are mitigated by the pool's elastic capacity.

CXL 3.0 vs. Previous Memory Expansion Approaches

It is worth understanding why CXL 3.0 is being adopted so quickly compared to earlier alternatives.

| Technology | Peak bandwidth | Latency | Multi-host sharing | Cache coherency |
|---|---|---|---|---|
| Local DRAM (DDR5) | ~38–51 GB/s per channel (DDR5-4800 to DDR5-6400) | ~60–80 ns | No | Yes |
| CXL 1.1 / 2.0 | ~128 GB/s per x16 link, bidirectional (PCIe 5.0) | ~200–400 ns | Limited (2.0 pooling) | Yes (host-only) |
| CXL 3.0 | ~256 GB/s per x16 link, bidirectional (PCIe 6.0) | ~100–300 ns | Yes (multi-host) | Yes (fabric-wide) |
| RDMA / network-attached memory | ~25–100 GB/s | ~1,000–5,000 ns | Yes | No |
| NVMe swap | ~7–14 GB/s | ~10,000–100,000 ns | No (swap is per-host) | No |

The performance advantage of CXL 3.0 over RDMA and NVMe-based memory extension is not marginal; it is an order-of-magnitude difference in latency, and that latency gap is exactly what separates workloads that "run fine" from workloads that "run fast."
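One way to sanity-check the order-of-magnitude claim is to compare the midpoints of the latency ranges in the table. The Python sketch below does exactly that; using midpoints is a simplifying assumption, since the ranges themselves are broad.

```python
# Latency ranges in nanoseconds, taken from the comparison table.
LATENCY_NS = {
    "Local DRAM (DDR5)": (60, 80),
    "CXL 3.0": (100, 300),
    "RDMA / network-attached memory": (1_000, 5_000),
    "NVMe swap": (10_000, 100_000),
}


def midpoint_ns(tech: str) -> float:
    low, high = LATENCY_NS[tech]
    return (low + high) / 2


def slowdown_vs_cxl(tech: str) -> float:
    """How many times slower `tech` is than CXL 3.0, comparing range midpoints."""
    return midpoint_ns(tech) / midpoint_ns("CXL 3.0")


if __name__ == "__main__":
    for tech in LATENCY_NS:
        print(f"{tech}: {slowdown_vs_cxl(tech):.2f}x CXL 3.0 latency")
```

With these midpoints, RDMA comes out about 15 times slower than CXL 3.0 and NVMe swap about 275 times slower. At the unfavorable edges of the ranges (300 ns for CXL against 1,000 ns for RDMA) the gap narrows to roughly 3x, so the comparison is a rough guide rather than a guarantee.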

What Hardware Is Actually Available in 2026?

CXL 3.0 has moved from paper specification to shipping silicon. Key milestones as of 2026:

- Intel Xeon 6 (Granite Rapids and beyond): Native CXL 3.0 support in the CPU, enabling direct connection to CXL memory expanders and fabric switches without additional controllers.
- AMD EPYC (Turin and successors): CXL 2.0 support shipping, with CXL 3.0 capabilities being introduced in next-gen roadmap platforms.
- Memory expander modules: Samsung, SK Hynix, and Micron all have CXL-attached DRAM modules in production, with capacities of up to 256 GB per module commercially available.
- CXL fabric switches: Vendors including Astera Labs, Microchip, and IntelliProp have CXL 3.0-capable switches shipping or in final qualification, enabling the multi-host pooling topology.

This means that dedicated server deployments planned for 2026–2027 can realistically include CXL 3.0 memory pooling as a supported, production-grade feature, not a lab experiment.

Who Benefits Most from CXL Memory Pooling on Dedicated Servers?

Not every workload needs CXL 3.0 today. Here is a practical breakdown of who should be evaluating it now versus later:

Strong Candidates — Evaluate Now

- AI/ML teams running large model inference: If you are serving models over 30B parameters and latency matters, CXL memory expansion is a concrete alternative to model sharding.
- Financial services running real-time analytics: In-memory risk calculation and tick data processing are memory-bandwidth-limited. CXL 3.0 addresses the bottleneck directly.
- Genomics and life sciences HPC: Working sets in genome assembly and protein folding routinely exceed single-server DRAM limits. CXL pooling removes that ceiling.
- Operators running high-density virtualization: Memory pool elasticity changes the economics of VM density on dedicated hosts.

Good Candidates — Plan for 2026–2027 Hardware Refresh

- Database administrators running PostgreSQL, MySQL, or MariaDB with large buffer pools
- SaaS companies whose application tier is memory-sensitive but not extreme
- DevOps teams looking to reduce memory-related performance bottlenecks in containerized workloads

Not Yet the Priority

Standard web hosting, CDN edge nodes, or compute-bound workloads where memory is not the bottleneck will see minimal benefit. CXL 3.0 is not a universal performance booster; it targets the memory gap specifically.

How CXL 3.0 Reshapes Dedicated Server Provisioning

From an infrastructure provider perspective, CXL 3.0 memory pooling introduces a meaningful change in how dedicated server capacity is planned and sold.

The traditional model bundles CPU, RAM, and storage into a fixed SKU. Once deployed, that configuration is static. With CXL 3.0, memory becomes a fluid resource at the rack level, and providers can deploy fewer total DRAM modules across a rack and allocate them efficiently based on actual demand, rather than worst-case over-provisioning per server.

For customers choosing a dedicated server provider, this translates into several practical advantages:

- Right-sized memory at provisioning: Pay for what your baseline workload needs, with burst capacity available from the pool.
- Faster scaling: Adding effective memory capacity no longer requires physical hardware replacement; pool reallocation can happen administratively.
- Better price-to-performance: Memory pooling reduces stranded capacity. Those savings can pass through to competitive pricing.
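The stranded-capacity argument comes down to the difference between summing each server's peak and sizing for the largest burst that actually happens at once. The Python sketch below uses made-up demand samples for four hypothetical servers and assumes perfectly aligned time steps, no safety headroom, and no allocation granularity; it illustrates the arithmetic, not a provisioning method.

```python
from __future__ import annotations

# Hypothetical demand samples (GB) for four servers over the same four time steps.
DEMAND_GB = {
    "db": [256, 512, 300, 280],
    "cache": [128, 160, 384, 140],
    "batch": [200, 210, 190, 448],
    "web": [64, 64, 64, 64],
}
# Hypothetical local DRAM each server keeps for its baseline working set (GB).
LOCAL_GB = {"db": 256, "cache": 128, "batch": 192, "web": 64}


def dedicated_sizing_gb(demand: dict[str, list[int]]) -> int:
    """Fixed SKUs: every server carries enough DRAM for its own worst case."""
    return sum(max(series) for series in demand.values())


def pooled_sizing_gb(demand: dict[str, list[int]], local_gb: dict[str, int]) -> int:
    """Local baselines plus one shared pool sized for the largest simultaneous burst."""
    steps = len(next(iter(demand.values())))
    bursts = [
        sum(max(0, demand[host][t] - local_gb[host]) for host in demand)
        for t in range(steps)
    ]
    return sum(local_gb.values()) + max(bursts)


if __name__ == "__main__":
    print("fixed per-server sizing:", dedicated_sizing_gb(DEMAND_GB), "GB")
    print("local baselines + pool:", pooled_sizing_gb(DEMAND_GB, LOCAL_GB), "GB")
```

With these made-up numbers, fixed per-server sizing needs 1,408 GB of DRAM while local baselines plus a shared pool need 946 GB, because the database, cache, and batch servers do not burst at the same time. If their peaks coincided, the advantage would shrink or vanish.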

At COLO BIRD, our dedicated server roadmap is actively aligned with CXL 3.0-capable hardware generations, ensuring clients have access to these architectural benefits as the hardware ecosystem matures through 2026 and 2027.

Latency Reality Check: How Close Is CXL 3.0 to Local DRAM?

One of the most common questions from engineers evaluating CXL 3.0 is: how much latency am I actually adding compared to local DIMM memory?

The honest answer in 2026 production environments:

- Best-case CXL 3.0 memory access latency: approximately 110–150 ns (versus ~60–80 ns for local DDR5)
- Typical mixed-workload penalty: 40–80% higher latency on CXL-resident data versus local DRAM
- Throughput: For streaming, bandwidth-heavy workloads, a single x16 CXL 3.0 link offers more bandwidth than a single DDR5 channel, and each added CXL link increases a socket's aggregate memory bandwidth on top of local DDR5

The practical implication: hot data should stay in local DRAM; cold or overflow data is ideal for CXL memory expansion. Modern memory tiering software, including support built into Linux kernel 6.x memory management and proprietary solutions from IBM and Intel, can automate this hot/cold tiering transparently.
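A toy model shows why hot/cold placement matters so much. The Python sketch below is not how the Linux kernel or any vendor tool implements tiering; it simply ranks pages by a hypothetical access count, keeps the hottest ones in local DRAM, and computes the access-weighted average latency. The 70 ns and 130 ns figures are assumptions picked from the ranges quoted above.

```python
from __future__ import annotations

# Illustrative latencies chosen from the quoted ranges (assumptions, not measurements).
LOCAL_NS = 70.0
CXL_NS = 130.0


def place_hot_pages(access_counts: dict[int, int], local_pages: int) -> tuple[set[int], float]:
    """Keep the most-accessed pages in local DRAM; return (hot pages, share of accesses they serve)."""
    ranked = sorted(access_counts, key=access_counts.__getitem__, reverse=True)
    hot = set(ranked[:local_pages])
    total = sum(access_counts.values())
    local_share = sum(access_counts[p] for p in hot) / total if total else 1.0
    return hot, local_share


def effective_latency_ns(local_share: float, local_ns: float = LOCAL_NS, cxl_ns: float = CXL_NS) -> float:
    """Access-weighted average latency when `local_share` of accesses hit local DRAM."""
    return local_share * local_ns + (1.0 - local_share) * cxl_ns


if __name__ == "__main__":
    counts = {0: 900, 1: 50, 2: 30, 3: 15, 4: 5}  # hypothetical per-page access counts
    hot, share = place_hot_pages(counts, local_pages=2)
    print(sorted(hot), f"{share:.0%} local", f"{effective_latency_ns(share):.1f} ns average")
    print(f"{effective_latency_ns(0.0):.1f} ns if everything lived in CXL memory")
```

With this made-up access pattern, keeping two of the five pages local serves 95% of accesses from DRAM and brings the average to about 73 ns, compared with 130 ns if everything lived in CXL memory.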

This is not a flaw; it is exactly how the technology is designed to be used. CXL memory is not a like-for-like replacement for local DRAM in every access pattern; it is a high-performance capacity extension that dramatically outperforms any alternative for bulk memory expansion.

What to Ask Your Dedicated Server Provider About CXL 3.0

If you are evaluating dedicated server options in 2026 with CXL 3.0 in mind, here are the right questions to ask:

- What CPU generation is the server using? Intel Xeon 6 or AMD EPYC Turin and later are your target platforms for native CXL support.
- Is CXL memory expansion available as a configurable option? Ask specifically about memory expander module availability and pooling topology.
- What is the CXL fabric topology? Single-host expansion vs. multi-host pooled memory are architecturally different; confirm which applies to your use case.
- What OS and kernel version are supported? Linux kernel 6.1 and later have solid CXL memory tiering support. Ensure your provider supports up-to-date kernel versions.
- What monitoring and management tooling is available? You need visibility into CXL memory utilization to actually manage it well.

Frequently Asked Questions

Is CXL 3.0 memory pooling available on dedicated servers today?

Yes. As of 2026, Intel Xeon 6-based dedicated servers with CXL memory expanders are available through select infrastructure providers. Full multi-host CXL 3.0 pooling with fabric switches is in production at leading colocation and dedicated server environments.

Does CXL 3.0 memory pooling require special software?

The Linux kernel (6.1+) supports CXL memory devices natively. For automated tiering between local DRAM and CXL memory, additional software such as Intel's Memory Optimizer or open-source DAMON-based tiering policies is recommended but not strictly required.

Is CXL 3.0 compatible with AMD EPYC servers?

AMD EPYC (Turin generation) supports CXL 2.0. Full CXL 3.0 fabric and multi-host pooling is expected in AMD's next platform generation. For maximum CXL 3.0 capability in 2026, Intel Xeon 6 platforms are the primary option.

How does CXL memory pooling differ from NUMA memory on multi-socket servers?

NUMA remote memory access is intra-server and requires crossing the CPU interconnect. CXL memory pooling is fabric-based, supports cross-server sharing, and offers more flexible topology than fixed NUMA configurations. CXL 3.0 latency is comparable to NUMA remote access in many configurations.

Will CXL 3.0 make dedicated servers more expensive?

Initially, yes: CXL-capable hardware carries a premium. However, memory pooling reduces per-server DRAM requirements by enabling shared capacity, which can offset the added cost significantly at rack scale. The TCO equation favors CXL at sufficient deployment density.

Conclusion: The Memory Architecture Shift Is Underway

CXL 3.0 memory pooling represents a genuine inflection point in dedicated server architecture: not a theoretical future capability, but a technology delivering measurable gains for AI, analytics, HPC, and virtualization workloads right now in 2026.

The performance advantages are real: lower latency than any network-attached memory alternative, bandwidth that rivals local DDR5 in streaming workloads, and the operational flexibility of treating memory as a rack-level resource rather than a per-server constant.

For businesses planning infrastructure decisions through 2026 and 2027, the question is no longer whether CXL 3.0 matters; it is whether your dedicated server provider is positioned to deliver it.

At COLO BIRD, we are. Explore our CXL-ready dedicated server configurations and find out how memory pooling can change the performance ceiling for your most demanding workloads.