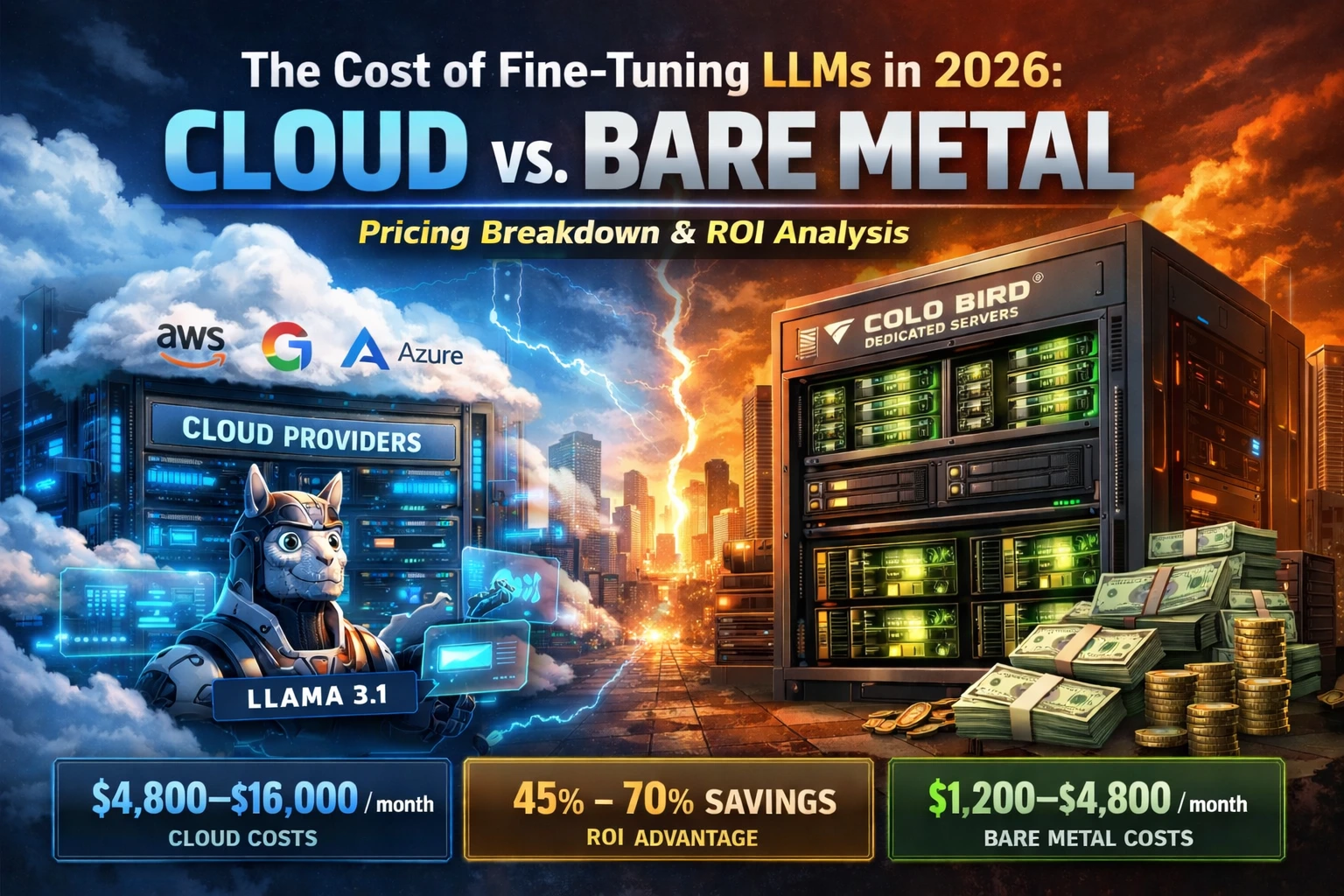

Quick Summary: LLM Training Economics in March 2026

- The Benchmark Workload: A typical ~30-day parameter update for Llama 3.1 70B or DeepSeek-V3 utilizing parameter-efficient techniques (LoRA/QLoRA).
- Hyperscale Cloud Cost: $4,800 – $16,000+ per month (AWS, GCP, Azure on-demand ephemeral instances).
- COLO BIRD Dedicated Infrastructure Cost: $1,200 – $4,800 per month (Fixed-rate physical hardware).
- The ROI Verdict: Transitioning AI workloads from virtualized cloud environments to single-tenant GPU servers can yield 45% to 70% savings, with capital preservation potentially exceeding 75% for continuous, long-term machine learning workloads.

Fine-tuning open-weight Large Language Models (LLMs) such as Llama 3.1 (70B / 405B), Mistral Large 2, or DeepSeek-R1 remains one of the most computationally demanding artificial intelligence workloads of 2026.

As model architectures scale and training datasets expand, AI startups, research labs, and enterprise engineering teams hit a critical financial bottleneck: compute provisioning. How much does it actually cost to fine-tune these foundation models in 2026, and what is the financial impact of migrating from hyperscale cloud ecosystems to dedicated GPU clusters?

In this financial breakdown, we analyze typical 2026 pricing from hyperscale and specialist GPU cloud providers (AWS, GCP, Azure, CoreWeave, Lambda Labs) and contrast it directly with the hardware economics of bare-metal GPU dedicated servers from COLO BIRD.

The 2026 Hardware Matrix: VRAM and Compute Requirements

Modern machine learning workflows rarely rely on full-parameter fine-tuning, which is far more memory-intensive. Today's AI engineering teams lean heavily on LoRA (Low-Rank Adaptation) and QLoRA across 1 to 8 interconnected GPUs, which achieves strong model adaptation while significantly lowering the VRAM and compute threshold.

Below is a baseline VRAM and compute estimate for fine-tuning today's leading open-weight architectures:

| Foundation Model | Parameter Scale | Fine-Tuning Method | VRAM Needed (per GPU) | Estimated Training Duration* | Dataset Size |
|---|---|---|---|---|---|
| Llama 3.1 | 70B / 405B | LoRA / QLoRA | 40–80 GB | Several days – weeks | 10k–500k examples |
| Mistral Large 2 | 123B | LoRA / Partial fine-tune | 80–120 GB | Several weeks | 50k–1M examples |
| Mixtral 8x22B | ~141B total (~39B active) | LoRA | 60–100 GB | Several days – weeks | 20k–300k examples |
| DeepSeek-V3 / R1 | 671B total (~37B active) | QLoRA | 80–160 GB | Multiple weeks | 100k–2M examples |

*Note: Actual training duration varies significantly depending on GPU count, batch size, sequence length, and dataset size.
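As a rough cross-check on VRAM figures like these, the memory needed for the frozen base weights alone can be estimated from the parameter count and the storage precision. The sketch below is a back-of-the-envelope aid, not a sizing tool: the function names are hypothetical, the `usable_fraction` headroom for activations, LoRA adapters, and optimizer state is an assumed value, and NF4 is treated as exactly 4 bits per weight.

```python
import math

# Approximate bytes per parameter for the frozen base weights.
# NF4 (the 4-bit format used by QLoRA) is treated as 0.5 bytes per weight;
# the small quantization constants are ignored in this sketch.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "nf4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """GB (10^9 bytes) needed just to hold the base-model weights."""
    return params_billions * BYTES_PER_PARAM[precision]


def min_gpus_for_weights(
    params_billions: float,
    precision: str,
    gpu_vram_gb: float = 80.0,
    usable_fraction: float = 0.8,  # assumption: 20% headroom per GPU
) -> int:
    """Smallest GPU count whose pooled usable VRAM fits the weights."""
    usable_per_gpu = gpu_vram_gb * usable_fraction
    return math.ceil(weight_memory_gb(params_billions, precision) / usable_per_gpu)


if __name__ == "__main__":
    for name, size in [("Llama 3.1 70B", 70), ("Mistral Large 2", 123), ("Llama 3.1 405B", 405)]:
        for precision in ("bf16", "nf4"):
            gb = weight_memory_gb(size, precision)
            gpus = min_gpus_for_weights(size, precision)
            print(f"{name} {precision}: ~{gb:.0f} GB weights, >= {gpus} x 80 GB GPU(s)")
```

A 70B model needs about 140 GB for weights in bf16 but about 35 GB in NF4, which is why QLoRA brings it within reach of a single 80 GB GPU. Real requirements depend on sequence length, batch size, and adapter rank.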

Hyperscale Cloud Pricing: The Premium on Elasticity

Renting NVIDIA H100-class GPUs from cloud platforms offers maximum elasticity, but at a steep price premium. Below are typical per-GPU pricing ranges for virtualized compute as of 2026 (on-demand unless marked as spot):

| Cloud Platform | Instance Hardware | Hourly Rate (per GPU) | 30-Day Cost (720 hrs) | 60-Day Cost (1,440 hrs) | Notes |
|---|---|---|---|---|---|
| AWS | H100 class | $3.50 – $7.00 | $2,520 – $5,040 | $5,040 – $10,080 | p5 / next-gen instances |
| Google Cloud | A3 (H100) | $3.20 – $6.80 | $2,304 – $4,896 | $4,608 – $9,792 | A3 Mega / Ultra |
| Azure | ND H100 v5 | $4.00 – $7.50 | $2,880 – $5,400 | $5,760 – $10,800 | High availability |
| CoreWeave | H100 / B200 class | $3.00 – $5.50 | $2,160 – $3,960 | $4,320 – $7,920 | Competitive but variable |
| Lambda Labs | H100 class | $2.99 – $4.50 | $2,153 – $3,240 | $4,306 – $6,480 | Popular for startups |
| RunPod / Vast.ai | H100 / similar | $1.80 – $3.80 (spot) | $1,296 – $2,736 | $2,592 – $5,472 | Spot node pricing fluctuates |

The Cloud Reality: Sustaining a 30-day Llama 3.1 70B LoRA training run across a 4-GPU cluster will typically consume roughly $9,000 – $16,000 in cloud compute credits, depending heavily on provider and instance availability.
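The 30-day figures follow directly from the hourly rates. A minimal sketch of that arithmetic, using the CoreWeave and Lambda Labs ranges from the table; the function name `cloud_cost` is hypothetical and the calculation assumes the instances run around the clock with no storage, egress, or support charges:

```python
def cloud_cost(hourly_rate_per_gpu: float, gpus: int = 1, days: int = 30) -> float:
    """On-demand compute cost in dollars for GPUs running 24 hours a day."""
    return hourly_rate_per_gpu * gpus * days * 24


# (low, high) hourly rates per GPU, copied from the pricing table.
RATES = {
    "CoreWeave": (3.00, 5.50),
    "Lambda Labs": (2.99, 4.50),
}

if __name__ == "__main__":
    for provider, (low, high) in RATES.items():
        low_cost = cloud_cost(low, gpus=4)
        high_cost = cloud_cost(high, gpus=4)
        print(f"{provider}, 4 GPUs, 30 days: ${low_cost:,.0f} - ${high_cost:,.0f}")
```

At CoreWeave's rates, four GPUs for 30 days come to $8,640 – $15,840, which lines up with the roughly $9,000 – $16,000 quoted above.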

Bare Metal Infrastructure Economics: The COLO BIRD Advantage

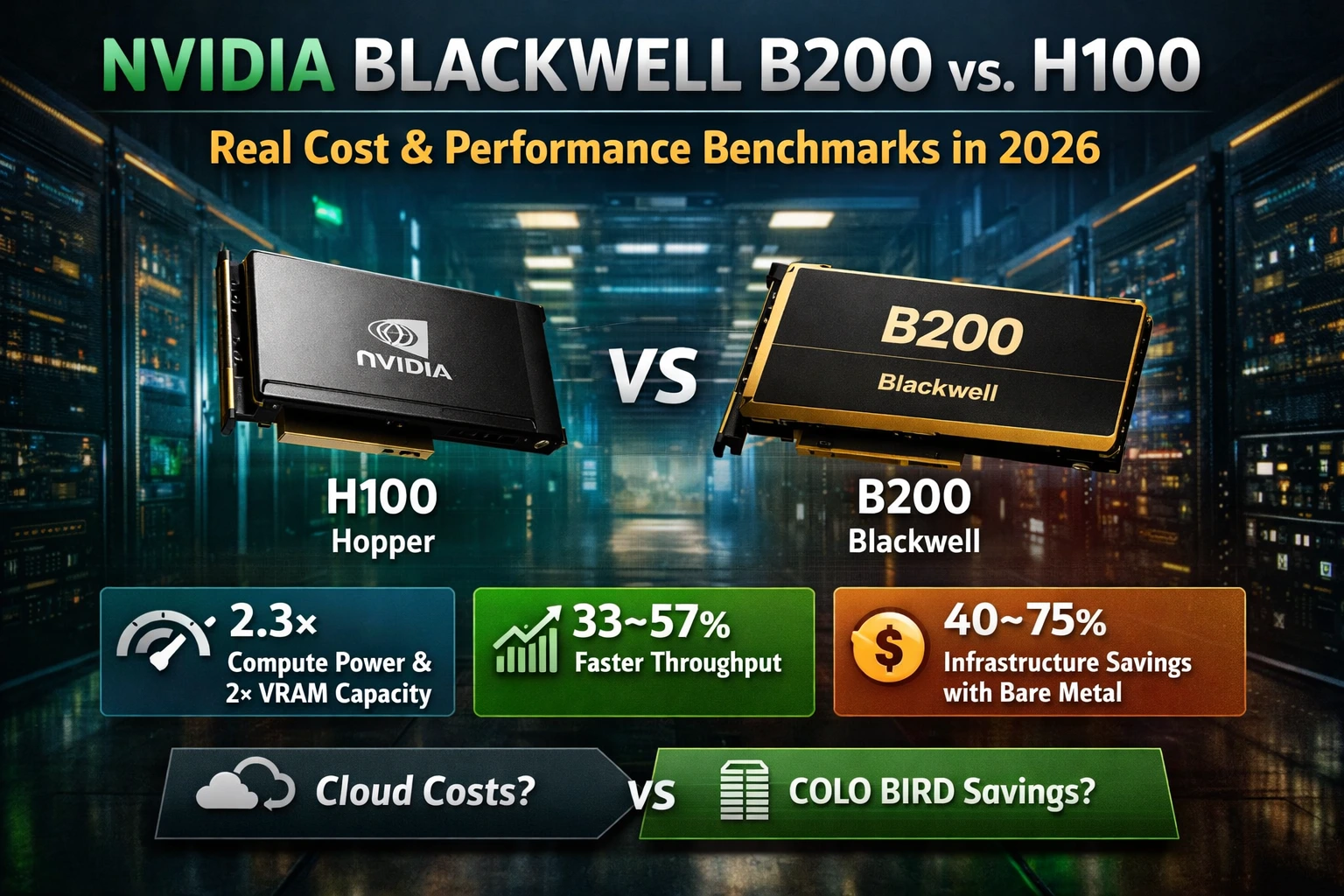

By transitioning AI workloads to fixed-rate physical infrastructure, organizations bypass virtualization overhead and secure highly predictable infrastructure operating costs. Below is an example projected pricing structure for dedicated NVIDIA Hopper (H100) and Ampere (A100) GPU servers.

| Hardware Configuration | GPU Topology | Flat Monthly Rate | Effective Hourly Rate (per GPU) | 30-Day Cost | 60-Day Cost | Architecture Notes |
|---|---|---|---|---|---|---|
| Entry Node | 1–2× H100 (or A100) | $1,450 – $2,600 | $2.01 – $3.61 | $1,450 – $2,600 | $2,900 – $5,200 | Single physical node |
| Mid-Range | 4× H100 | $4,800 – $7,200 | $1.67 – $2.50 | $4,800 – $7,200 | $9,600 – $14,400 | NVLink ready |
| High-Density | 8× H100 | $9,200 – $13,500 | $1.60 – $2.34 | $9,200 – $13,500 | $18,400 – $27,000 | Rack scale deployment |
| Future Blackwell | 4× B200 | ~$6,500 – $9,800 | ~$2.25 – $3.40 | n/a | n/a | Late-2026 Waitlist |

*Note: High-end GPU pricing reflects current market estimates and may vary depending on hardware availability and global deployment location. Effective hourly rates divide the flat monthly rate by 720 hours and by the GPU count; the Entry Node figures correspond to a single GPU.
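The effective hourly column can be reproduced by spreading the flat monthly rate over a 720-hour month and the number of GPUs in the node. A short sketch of that conversion, with a hypothetical function name and the monthly rates taken from the table:

```python
HOURS_PER_MONTH = 30 * 24  # 720, the same month length the cloud table uses


def effective_hourly_rate(monthly_rate: float, gpus: int) -> float:
    """Per-GPU hourly cost implied by a flat monthly rate at 100% utilization."""
    return monthly_rate / (gpus * HOURS_PER_MONTH)


if __name__ == "__main__":
    configs = [
        ("Mid-Range 4x H100", 4, (4800, 7200)),
        ("High-Density 8x H100", 8, (9200, 13500)),
    ]
    for name, gpus, (low, high) in configs:
        low_rate = effective_hourly_rate(low, gpus)
        high_rate = effective_hourly_rate(high, gpus)
        print(f"{name}: ${low_rate:.2f} - ${high_rate:.2f} per GPU-hour")
```

These figures assume the server is busy every hour of the month. At lower utilization the effective rate per useful GPU-hour rises proportionally, which is what the break-even analysis later in the article captures.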

💰 The Financial Impact (4× H100 Cluster for 30 Days)

- Cloud Average (CoreWeave / Lambda): ~$9,000 – $16,000
- COLO BIRD Dedicated Hardware: ~$4,800 – $7,200
- Capital Preserved: ~45% to 70%. (On 90-day continuous machine learning workloads the percentage stays the same, because both the cloud on-demand rate and the dedicated monthly rate are flat, but the absolute dollar savings triple.)
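Because both costs are quoted as ranges, the savings percentage depends on which ends are compared. The sketch below works through the four combinations using the 30-day figures above; `savings_pct` is a hypothetical helper, not a COLO BIRD formula:

```python
def savings_pct(cloud_cost: float, dedicated_cost: float) -> float:
    """Percentage saved by paying dedicated_cost instead of cloud_cost."""
    return (cloud_cost - dedicated_cost) / cloud_cost * 100


CLOUD = (9000, 16000)     # 4x H100, 30 days, cloud range from the article
DEDICATED = (4800, 7200)  # 4x H100, 30 days, dedicated range from the article

if __name__ == "__main__":
    scenarios = {
        "low cloud vs low dedicated": (CLOUD[0], DEDICATED[0]),
        "high cloud vs high dedicated": (CLOUD[1], DEDICATED[1]),
        "high cloud vs low dedicated": (CLOUD[1], DEDICATED[0]),
        "low cloud vs high dedicated": (CLOUD[0], DEDICATED[1]),
    }
    for label, (cloud, dedicated) in scenarios.items():
        print(f"{label}: {savings_pct(cloud, dedicated):.0f}% saved")
```

Like-for-like comparisons land at roughly 47% and 55%, and the best case reaches 70%. Pairing the cheapest cloud quote with the most expensive dedicated quote gives about 20%, so it is worth running the numbers with actual quotes rather than relying on the headline range.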

Analyzing the Break-Even Point: Cloud vs. Dedicated Compute

Whether to deploy ephemeral cloud instances or lease single-tenant physical servers depends mainly on monthly utilization. For most AI workloads, the break-even point typically falls between 15 and 18 days of use per month.

| Monthly Compute Utilization | Cloud Virtualization Cheaper? | Dedicated Hardware Cheaper? | Typical Break-Even Point |
|---|---|---|---|
| < 10 days | Yes | No | n/a |
| 10–20 days | Sometimes | Often | ~12–15 days |
| 20–30 days | No | Yes | 15–18 days |
| 30 days (continuous, multi-month) | No | Strongly | < 12 days |

💡 Infrastructure Rule of Thumb: If your engineering team runs training workloads or hosts inference APIs for more than 15–18 days per month, dedicated GPU infrastructure is usually the more cost-efficient option.
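The break-even point is the number of days at which a month of pay-per-hour cloud usage costs the same as the flat dedicated rate. A minimal sketch under stated assumptions: the function name is hypothetical, the $6,000 dedicated rate is the midpoint of the 4× H100 range above, and $3.75 per GPU-hour is an illustrative cloud rate near the middle of the Lambda Labs range.

```python
def break_even_days(dedicated_monthly: float, cloud_hourly_per_gpu: float, gpus: int) -> float:
    """Days of 24-hour cloud usage per month that cost as much as the flat rate."""
    cloud_cost_per_day = cloud_hourly_per_gpu * gpus * 24
    return dedicated_monthly / cloud_cost_per_day


if __name__ == "__main__":
    # Illustrative midpoint scenario (assumed values, see lead-in).
    typical = break_even_days(6000, 3.75, gpus=4)
    print(f"Midpoint scenario: {typical:.1f} days")

    # Extremes: cheapest dedicated vs priciest CoreWeave rate, and the reverse.
    earliest = break_even_days(4800, 5.50, gpus=4)
    latest = break_even_days(7200, 3.00, gpus=4)
    print(f"Range across quoted prices: {earliest:.1f} - {latest:.1f} days")
```

The midpoint scenario breaks even at about 16.7 days, inside the 15–18 day window. Across the full quoted price ranges the break-even runs from roughly 9 to 25 days, so the threshold shifts noticeably with the specific quotes being compared.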

The Technical Superiority of Single-Tenant AI Servers

Financial ROI is only half the equation. When deploying bare-metal AI infrastructure, engineering teams gain deeper system-level control compared to abstracted, multi-tenant cloud environments.

- Kernel-Level Control: Full root access enables custom CUDA stacks, specialized NVIDIA drivers, and highly optimized NCCL networking configurations without hypervisor restrictions.
- Long-Running Training Stability: Dedicated infrastructure eliminates the risk of preemptible "spot instances" abruptly terminating long-running deep learning jobs.
- Reduced Data Transfer Costs: Massive neural network checkpoints and multi-terabyte datasets can be transferred without the exorbitant network egress fees associated with hyperscale providers.
- Global Data Residency Options: GPU clusters can be provisioned in strategic regional locations (Singapore, Hong Kong, Tokyo, Seoul, New York, or Amsterdam) to ensure strict data compliance and minimal latency.
- Network-Layer Security: Public LLM inference endpoints are natively protected with enterprise-grade DDoS mitigation protocols.

Procurement Checklist: Is Bare Metal Right for Your Next Build?

- Will this compute node be active more than 15–18 days per month? → Yes → Provision Bare Metal
- Does your team require custom CUDA drivers or kernel-level optimization? → Yes → Provision Bare Metal
- Are you processing sensitive or proprietary datasets requiring strict hardware isolation? → Yes → Provision Bare Metal
- Do you need hundreds of GPUs instantly for short-term, 3-day experiments? → Yes → Cloud Elasticity is likely better
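The checklist can be expressed as a small decision helper. This is only an illustrative sketch: the `Workload` fields, the `recommend` function, the 15-day default threshold, and the choice to let the short-burst question override the others are all assumptions, since the checklist does not define how conflicting answers should be resolved.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    active_days_per_month: int
    needs_custom_drivers: bool      # custom CUDA drivers or kernel-level tuning
    needs_hardware_isolation: bool  # sensitive or proprietary datasets
    short_burst_many_gpus: bool     # hundreds of GPUs for a few days


def recommend(workload: Workload, break_even_days: int = 15) -> str:
    """Return 'bare metal' or 'cloud' following the checklist questions."""
    # Assumption: a short, very large burst favors cloud regardless of other answers.
    if workload.short_burst_many_gpus:
        return "cloud"
    if (
        workload.active_days_per_month > break_even_days
        or workload.needs_custom_drivers
        or workload.needs_hardware_isolation
    ):
        return "bare metal"
    return "cloud"


if __name__ == "__main__":
    steady_training = Workload(25, False, False, False)
    burst_experiment = Workload(3, False, False, True)
    print("Steady 25-day training:", recommend(steady_training))
    print("3-day burst experiment:", recommend(burst_experiment))
```

Passing a different `break_even_days` value lets a team plug in the threshold computed from its own quotes instead of the default.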

Architect Your AI Infrastructure with COLO BIRD

We provision enterprise-grade GPU dedicated servers engineered specifically for modern machine learning workloads. Configurations range from entry-level multi-GPU nodes (A100 80GB) to high-density H100 NVLink clusters, featuring rapid deployment capabilities across global data center regions.

Preparing to fine-tune massive architectures like Llama 3.1 405B, DeepSeek-R1, or Mistral Large 2? Contact our engineering team for a detailed compute estimate comparing dedicated physical hardware directly against your current cloud environment.