Search for GPU server advice right now, and you will find hundreds of blog posts confidently declaring the same thing: "Only H100 or A100 GPUs are suitable for serious AI workloads." Stack Overflow threads. Hosting comparison guides. Even technical deep-dives. All repeat the same claim.

Almost every one of them is AI-generated content, and almost every one is wrong for your specific workload.

Here is the reality of GPU selection in 2026: the right GPU for AI is not the most expensive one. It is the one that matches your model size, your workload pattern, and your traffic profile. Recommending an H100 for every AI task is like telling someone they need a racing truck to drive to the grocery store. Technically capable. Financially catastrophic.

In this guide, you will get a precise workload-to-GPU matching framework, a breakdown of what AI blogs keep getting wrong, and a transparent cost comparison showing what each tier actually costs on a GPU dedicated server from COLO BIRD versus per-hour cloud GPU pricing.

What AI-Generated Blogs Keep Getting Wrong About GPU Selection

The proliferation of AI-written hosting content has created a specific, expensive problem for engineering teams making GPU infrastructure decisions. Here is what these blogs repeat, and why each claim misleads:

What competitor blogs keep publishing (false):

- "Only the latest NVIDIA H100 or A100 GPUs are suitable for AI training and inference."
- "For any serious deep learning workload, you need H100s — anything less will bottleneck your model."
- "Cloud GPU infrastructure is always better than dedicated GPU servers for AI."
- "Consumer GPUs like the RTX 4090 are not viable for production AI workloads."

Each of these claims contains a kernel of truth stretched into a universal rule that doesn't hold. The H100 is genuinely the right hardware for one specific workload category: pre-training foundation models at scale above 70 billion parameters. For everything else, and "everything else" describes the vast majority of production AI infrastructure, there are better-matched, lower-cost options.

The core confusion is that AI writing tools mix up training, fine-tuning, and inference: three fundamentally different workloads with very different hardware requirements. GPU selection is not a prestige decision. It is a VRAM, memory bandwidth, and workload continuity problem.

The GPU Workload Matching Guide: COLO BIRD's Full Range

COLO BIRD's GPU dedicated server range spans the full NVIDIA data center lineup — from the A2 for edge inference all the way to dual-A100 configurations for large-scale model training. Here is how each tier maps to real AI workloads:

| GPU | VRAM | Best AI Task | COLO BIRD Option | Workload Fit |
|---|---|---|---|---|
| NVIDIA A10 | 24 GB GDDR6 | Inference on 7B–13B models, video transcoding, VDI | GPU Dedicated Server (A10) | Dev & small-scale inference |
| NVIDIA A40 | 48 GB GDDR6 | Production inference, RAG pipelines, generative AI, rendering | GPU Dedicated Server (A40) | SaaS AI products, multimodal |
| NVIDIA A100 80GB | 80 GB HBM2e | Large-scale training, multi-tenant MIG, 70B+ models | GPU Dedicated Server (A100) | Enterprise AI, research labs |
| NVIDIA A16 | 64 GB GDDR6 (4×16) | VDI, vGPU workloads, multiple virtual desktops | GPU Dedicated Server (A16) | Virtual desktop / VDI fleets |
| NVIDIA A2 | 16 GB GDDR6 | Edge inference, light ML tasks, developer testing | GPU Dedicated Server (A2) | Edge AI, cost-efficient dev |
| Tesla P4 | 8 GB GDDR5 | Video encoding, light inference, legacy ML | GPU Dedicated Server (P4) | Video pipelines, light inference |

The key principle: GPU selection is a VRAM-first decision. A model with N billion parameters needs approximately 2×N GB of VRAM for inference in FP16, and at least 4×N GB for full fine-tuning (FP16 weights plus gradients; optimizer states and activations add more on top). Match this number first, then consider throughput, concurrency, and budget. Most production AI applications run models in the 7B–13B range. The A10 and A40 cover this tier completely, at a fraction of the A100 or H100 cost.
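The rule of thumb above can be written as a small calculation. The sketch below is illustrative only: the function name `estimate_vram_gb` is hypothetical, the 2 and 4 multipliers are taken directly from the rule as stated here, and the result ignores KV cache, activations, optimizer states, and framework overhead, so treat it as a floor rather than a sizing guarantee.

```python
def estimate_vram_gb(params_billion: float, mode: str = "inference") -> float:
    """Rough FP16 VRAM floor in GB, following the 2xN / 4xN rule of thumb."""
    # Multipliers come from the rule of thumb in the text, not from measurement.
    multipliers = {"inference": 2.0, "full_finetune": 4.0}
    if mode not in multipliers:
        raise ValueError(f"unknown mode: {mode!r}")
    return params_billion * multipliers[mode]


for size in (7, 13, 30, 70):
    print(f"{size}B: inference ~{estimate_vram_gb(size):.0f} GB, "
          f"full fine-tune ~{estimate_vram_gb(size, 'full_finetune'):.0f} GB")
```

Comparing the printed floor against a card's VRAM (24 GB, 48 GB, 80 GB) gives a first pass at which tiers are even candidates.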

Breaking Down Each AI Workload — And the Right GPU For It

LLM inference and production model serving

This is the most common AI infrastructure workload and the one where the H100 recommendation causes the most financial damage. Production inference means your model is actively serving user requests, generating tokens, answering queries, running RAG pipelines, or powering a real-time AI API.

For serving 7B to 34B parameter models, which cover Llama 3.1, Mistral, Phi-3, DeepSeek V2, and most fine-tuned open-weight models, the NVIDIA A40 (48 GB GDDR6) is the highest-value dedicated GPU in 2026. It handles models up to roughly 20B at FP16 precision with room for KV cache overhead (larger models in this range need INT8 or INT4 quantization to fit in 48 GB), supports the TensorRT and vLLM serving frameworks, and delivers consistent token throughput without the HBM premium you pay for on the A100 or H100.

For smaller models in the 7B range serving moderate concurrency, and for teams running multiple smaller inference instances simultaneously, the NVIDIA A10 (24 GB GDDR6) is purpose-built for inference workloads at the lowest cost per token in COLO BIRD's range.

Real benchmark context: An A40 running Llama 3.1 8B via vLLM at batch size 8 delivers approximately 280–340 tokens per second at FP16, sufficient for most API-scale serving below 50 concurrent users. For higher-concurrency production endpoints, dual-A40 or A100 configurations expand capacity roughly linearly.

Full model pre-training: the one case where the A100 is truly required

Training a foundation model from scratch, training any model above 30–40 billion parameters, or running anything that requires NVLink multi-GPU communication at scale is where the NVIDIA A100 80GB earns its position. The A100's 80 GB of HBM2e memory holds gradient tensors, optimizer states, and activation checkpoints simultaneously for large models. Its Multi-Instance GPU (MIG) capability, which partitions one physical A100 into up to 7 isolated compute instances, makes it indispensable for shared research environments.

The critical caveat AI blogs miss: pre-training foundation models from scratch describes almost no company outside frontier AI labs. If you are fine-tuning an existing model, running inference, or training on a proprietary dataset, your A100 budget almost always exceeds your actual requirement.

Fine-tuning open-weight models: LoRA, QLoRA, and PEFT workflows

Parameter-efficient fine-tuning methods have fundamentally changed the VRAM requirements for customising large language models. A 13B parameter model fine-tuned with QLoRA can fit in 24–30 GB of VRAM. A 7B LoRA fine-tune runs comfortably in under 20 GB. This makes fine-tuning on COLO BIRD's A10 (24 GB) or A40 (48 GB) tier highly cost-effective.
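One way to see why parameter-efficient methods shrink the memory footprint is to count trainable values. LoRA replaces the update to a d×k weight matrix with two low-rank factors of rank r, so it trains r×(d+k) values instead of d×k. The sketch below uses hypothetical dimensions (a 4096×4096 projection and rank 16) chosen purely for illustration; they are not taken from any specific model or from COLO BIRD's documentation.

```python
def lora_trainable_params(d: int, k: int, rank: int) -> int:
    """Trainable values LoRA adds for one d x k weight matrix (factors d x r and r x k)."""
    return rank * (d + k)


d, k, rank = 4096, 4096, 16            # hypothetical layer shape and LoRA rank
full = d * k                            # values updated by full fine-tuning
lora = lora_trainable_params(d, k, rank)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.2%}")
```

Gradients and optimizer states are kept only for the trainable values, which is where most of the saving comes from. QLoRA additionally stores the frozen base weights in 4-bit form.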

Framework compatibility: All COLO BIRD GPU dedicated servers support PyTorch, TensorFlow, CUDA, and popular serving frameworks, including vLLM, Ollama, and TensorRT-LLM. Available OS options include Ubuntu 22.04/24.04, Debian 11/12, AlmaLinux, Rocky Linux, CentOS, and Windows Server 2019/2022.

Generative AI, image diffusion, and video workloads

Stable Diffusion, Flux, image-to-video pipelines, and multimodal AI applications occupy a distinct GPU requirement tier. The NVIDIA A40 is COLO BIRD's leading recommendation for this workload category, combining 48 GB of memory with third-generation Tensor Cores (the A40 is an Ampere-generation part). It avoids the trade-offs of consumer GPUs while delivering the professional data center reliability of ECC memory and 24/7 thermal stability.

Virtual desktop infrastructure (VDI) and vGPU workloads

Enterprise teams running virtual desktop environments or remote workstations have a distinct GPU requirement: vGPU partitioning capability. The NVIDIA A16 (four GPUs on a single board, each with its own 16 GB, for 64 GB in total) is specifically designed for this use case. The NVIDIA A2 serves the entry tier for edge AI inference and light developer GPU workloads.

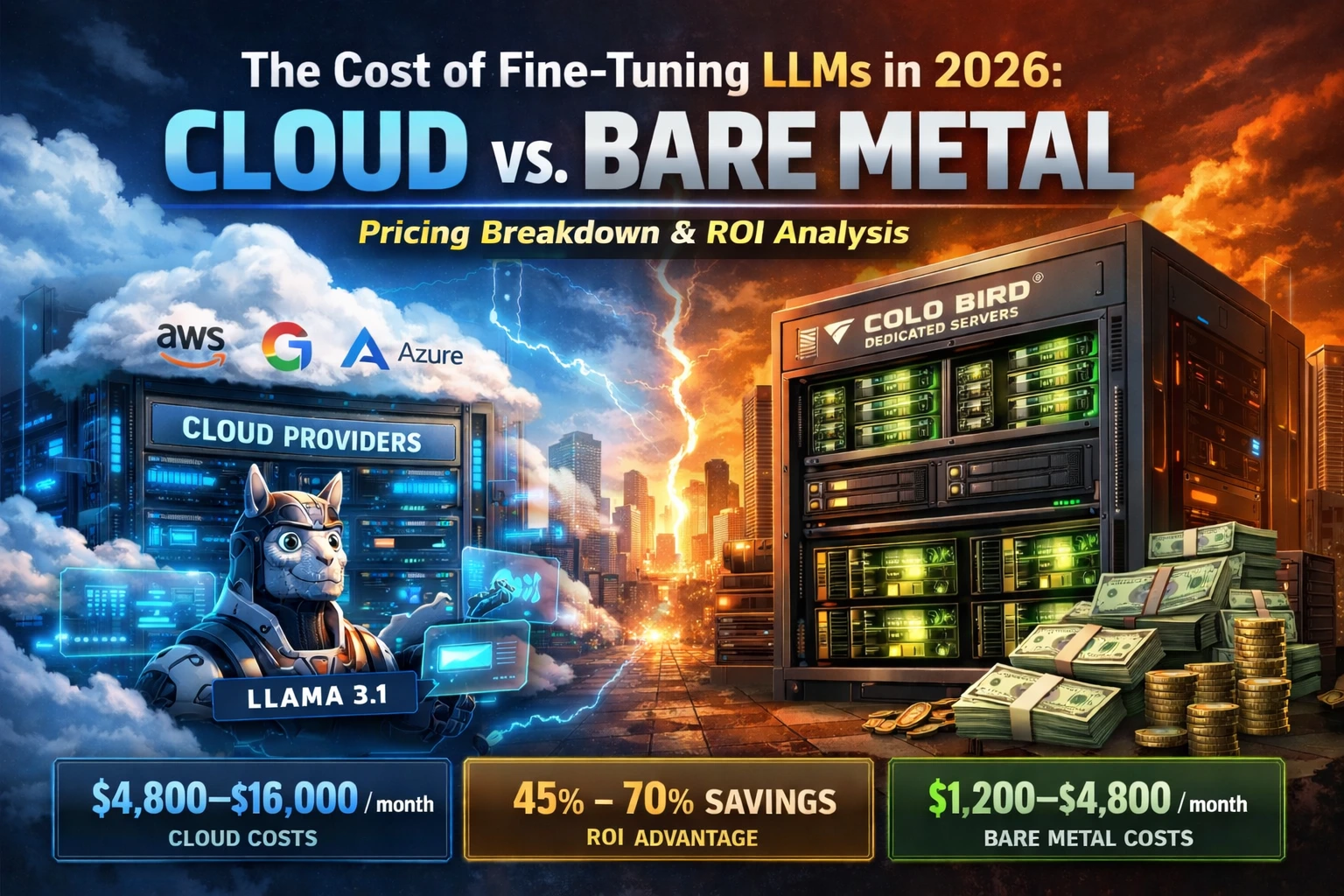

Cloud GPU vs. Dedicated GPU Server: The Real Total Cost of Ownership

The second major claim AI blogs repeat, "cloud GPU is always better for AI workloads", ignores the fundamental economics of continuous versus bursty compute. The distinction is everything:

- Cloud GPU wins when your AI workload is bursty or experimental. Prototyping a new model architecture or running a 200-hour job once a month genuinely benefits from pay-per-hour elasticity.
- Dedicated GPU wins when your AI workload is continuous, for example a production API serving requests 24/7. In these cases, cloud's per-hour billing compounds into a significant monthly premium over the fixed cost of a dedicated GPU server, while also exposing your workload to the noisy-neighbour effect.

| Scenario | Cloud GPU (pay-per-hour) | COLO BIRD Dedicated GPU |
|---|---|---|
| A100 production inference, 24/7, 1 month | ~$1,440–$2,190/mo | ~$500–$750/mo (fixed) |
| A40 inference endpoint, 24/7, 1 month | ~$915–$1,300/mo | ~$380–$600/mo (fixed) |
| A100 model serving, 1 year | ~$17,280–$26,280/yr | ~$6,000–$9,000/yr |
| 5 TB/mo data transfer (egress) | ~$450–$600 added | Included (unmetered option) |
| DDoS protection | $75–$3,000+/mo | Free (250 Gbps) |
| Noisy-neighbour risk | High (shared GPU node) | None (bare metal) |
| GPU performance consistency | Variable under load | Guaranteed, dedicated hardware |

Total cost comparison: A100 inference, 3-year horizon

Cloud (24/7): ~$51,840–$78,840 over 3 years

COLO BIRD dedicated: ~$18,000–$27,000 over 3 years

Potential saving: $24,000–$52,000 per A100 server over 3 years
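The 3-year totals follow directly from the monthly A100 ranges in the comparison table. The sketch below reproduces that arithmetic; the variable names are illustrative, and the "conservative saving" pairs the cheapest cloud estimate with the most expensive dedicated estimate, which is one reasonable way to read the lower bound, not necessarily how the figures above were derived.

```python
MONTHS = 36  # 3-year horizon

# Monthly A100 ranges (USD, low and high) copied from the comparison table.
cloud_monthly = (1440, 2190)
dedicated_monthly = (500, 750)

cloud_total = tuple(m * MONTHS for m in cloud_monthly)
dedicated_total = tuple(m * MONTHS for m in dedicated_monthly)

# Conservative saving: cheapest cloud estimate vs. most expensive dedicated estimate.
conservative_saving = cloud_total[0] - dedicated_total[1]

print(f"cloud:     ${cloud_total[0]:,} to ${cloud_total[1]:,}")
print(f"dedicated: ${dedicated_total[0]:,} to ${dedicated_total[1]:,}")
print(f"conservative saving: ${conservative_saving:,}")
```

Swapping in your own quoted monthly prices is the quickest way to check whether the gap holds for your configuration.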

The noisy-neighbour problem deserves special attention for AI inference workloads. On shared cloud GPU infrastructure, another tenant running a heavy training job on the same physical node can cause your inference latency to spike unpredictably. On a COLO BIRD bare-metal GPU dedicated server, your GPU, VRAM, NVMe storage, and network bandwidth are exclusively yours. The result is consistent token throughput at all hours.

VRAM Quick-Reference: Match Your Model to the Right GPU

Use this table as your shortcut before making any GPU infrastructure decision. VRAM is the hard ceiling: if your model doesn't fit, nothing else matters.

| Model Size | VRAM for Inference (FP16) | VRAM for Fine-tuning | Right COLO BIRD GPU |
|---|---|---|---|
| 7B parameters | ~14 GB | ~18–24 GB (LoRA) | NVIDIA A10 (24 GB) |
| 13B parameters | ~26 GB | ~32–40 GB | NVIDIA A40 (48 GB) |
| 30B parameters | ~60 GB | ~70–80 GB | NVIDIA A40 × 2 or A100 |
| 70B parameters | ~140 GB | ~160+ GB | NVIDIA A100 × 2 |
| 70B+ pre-training | ~280+ GB (multi-GPU) | Requires NVLink cluster | NVIDIA A100 cluster |

Quantization note: INT8 quantization reduces VRAM requirements by approximately 50%. INT4 (via GGUF, AWQ, or GPTQ) reduces by approximately 75%, with some quality trade-off at the extremes. A 13B model running INT4 quantization fits comfortably in 8–10 GB of VRAM, opening up the A10 for models that would otherwise require the A40 tier.
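The percentages in the quantization note fall out of bytes per parameter: 2 for FP16, 1 for INT8, and 0.5 for INT4. The sketch below computes weight memory only. The function and table names are hypothetical, and real quantized formats carry some extra metadata (scales, zero points) that this ignores.

```python
# Bytes stored per parameter at each precision (weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Memory for the weights alone, in GB (billions of params x bytes per param)."""
    return params_billion * BYTES_PER_PARAM[precision]


for precision in BYTES_PER_PARAM:
    print(f"13B @ {precision}: ~{weight_memory_gb(13, precision):.1f} GB of weights")
```

For a 13B model this gives about 6.5 GB of weights at INT4, which is consistent with the 8–10 GB figure above once KV cache and runtime overhead are added.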

COLO BIRD GPU Dedicated Servers: The Right Hardware for Every AI Workload

COLO BIRD's GPU dedicated server range is built on one principle: provision the GPU tier that matches the workload, not the one that generates the highest price tag.

NVIDIA A10: inference and light fine-tuning (24 GB GDDR6)

Ideal for: serving 7B LLMs in production, RAG API endpoints, developer environments, and cost-conscious AI inference at scale. The A10 is COLO BIRD's entry point into GPU dedicated hosting for AI teams running small to mid-size language models on a budget-conscious infrastructure.

NVIDIA A40: production inference and generative AI (48 GB GDDR6)

Ideal for: SaaS AI products, multimodal inference, Stable Diffusion / Flux pipelines, RAG at scale, and models between 13B and 34B parameters. The A40 is COLO BIRD's most recommended GPU tier for AI product teams in 2026.

NVIDIA A100: large-scale training and multi-tenant environments (80 GB HBM2e)

Ideal for: training models above 40B parameters, multi-tenant GPU pools using MIG, research labs, and enterprise AI teams running full-precision 70B inference. Available in single and dual-GPU configurations, deployed across 250+ global data center locations.

NVIDIA A16 and A2: VDI, edge inference, and developer testing

Ideal for: virtual desktop infrastructure running vGPU workloads (A16), and edge AI inference and budget-conscious developer environments (A2).

Tesla P4 and Quadro RTX 4000: video pipelines and specialist workloads

For video transcoding, encoding pipelines, and legacy ML inference workloads, COLO BIRD's Tesla P4 and Quadro RTX 4000 configurations provide cost-efficient dedicated GPU hosting.

Custom GPU server configurations

- COLO BIRD builds and deploys custom GPU dedicated server configurations in 24–72 hours.
- Choose your GPU tier, CPU (AMD EPYC, AMD Ryzen, Intel Xeon), RAM, NVMe storage, and network uplink.
- Single and dual GPU options available. Multi-GPU clusters configured on request.
- Supported OS: Ubuntu 22.04/24.04, Debian 11/12, AlmaLinux, Rocky Linux, CentOS, Windows Server 2019/2022, VMware ESXi.
- Deploy across 250+ global data center locations in North America, Europe, Asia, APAC, South America, and Africa.

How to Choose: A Three-Question GPU Infrastructure Framework

Before committing to any GPU server configuration, answer these three questions:

1. What is the VRAM floor for my model?

Calculate using the rule above (2×N GB for inference, at least 4×N GB for training at FP16). If you're using INT8 or INT4 quantization, halve or quarter that figure. This sets your minimum GPU tier.

2. Is my workload continuous or bursty?

If your GPU is active for 16+ hours per day or running 24/7 production inference, dedicated GPU hosting will be 40–65% cheaper than cloud GPU at equivalent specs. If your workload runs a few hours per week, cloud's per-hour billing may be more economical.

3. What concurrency and latency does my API require?

Below 30 concurrent users, a single A10 or A40 handles most LLM inference workloads. Above 50 concurrent users with strict latency SLAs, scale to dual-A40 or A100 configurations. Above 100 concurrent users with sub-100ms p99 latency requirements, contact COLO BIRD for a multi-GPU cluster quote.
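For the second question, the continuous-versus-bursty decision reduces to a break-even point: the number of GPU-hours per day at which hourly cloud billing equals a fixed monthly server price. The sketch below is a minimal illustration. The function name is hypothetical, and the $600 per month and $2.00 per hour inputs are example values chosen to sit within the A100 ranges quoted earlier, not actual quotes.

```python
def breakeven_hours_per_day(dedicated_monthly: float, cloud_hourly: float,
                            days_per_month: int = 30) -> float:
    """Daily GPU-hours above which a fixed monthly server beats hourly billing."""
    return dedicated_monthly / (cloud_hourly * days_per_month)


# Hypothetical inputs: $600/month dedicated server, $2.00/hour cloud GPU.
hours = breakeven_hours_per_day(600, 2.00)
print(f"dedicated is cheaper above ~{hours:.1f} GPU-hours per day")
```

The break-even moves with the rates you are actually quoted, and it ignores egress and other add-on charges, so run it with real prices before deciding.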

If you want us to run this calculation on your actual workload (model size, expected concurrency, and daily active hours), get in touch with COLO BIRD's team. We will give you an honest recommendation, including cases where the cloud is the right answer.

Frequently Asked Questions About GPU Dedicated Servers for AI

What is a GPU dedicated server, and how does it differ from a cloud GPU?

A GPU dedicated server gives you exclusive access to a physical server with one or more NVIDIA GPUs, with no shared hardware and no noisy-neighbour effect. Cloud GPU rents GPU capacity on shared infrastructure, billed per hour. For continuous AI workloads (24/7 inference, ongoing model serving), dedicated GPU servers are typically 40–65% cheaper.

Which GPU is best for LLM inference in 2026?

For most production LLM inference workloads (7B–34B parameter models), the NVIDIA A40 (48 GB) and A10 (24 GB) are the best-value dedicated GPUs available in 2026. The A100 80GB is appropriate for larger models (70B+) or high-concurrency endpoints above 50 concurrent requests.

Do I need an H100 for AI model training?

Only if you are pre-training a foundation model above 70 billion parameters from scratch, which describes fewer than 1% of AI projects. For fine-tuning existing models using LoRA or QLoRA, the A100 or even the A40 handles most tasks.

What VRAM do I need for my model size?

A rough rule for a model with N billion parameters: VRAM for inference (FP16) ≈ 2 × N GB, and VRAM for full fine-tuning is at least 4 × N GB. Using INT8 or INT4 quantization roughly halves or quarters these figures.

How quickly can I deploy a GPU dedicated server with COLO BIRD?

COLO BIRD builds and deploys custom GPU dedicated server configurations in 24–72 hours across 250+ global locations.

What AI frameworks are supported on COLO BIRD GPU servers?

All major AI and deep learning frameworks are supported: PyTorch, TensorFlow, CUDA, Triton Inference Server, vLLM, Ollama, TensorRT-LLM, Hugging Face Transformers, and LangChain. COLO BIRD provides full root access with no software restrictions.

The Takeaway: Match the GPU to the Workload, Not the Hype

"You need H100s for AI" is this decade's version of "you need a supercomputer for data analysis", a blanket statement that ignores the actual distribution of real workloads. In 2026, the AI infrastructure landscape has matured enough to match hardware to task with precision.

For most production inference workloads, the A40 or A10 delivers everything you need at 40–60% of the A100 cost. For fine-tuning on open-weight models, LoRA and QLoRA have moved the VRAM requirement down into the A10 and A40 range for most practical tasks. For large-scale training, the A100 remains the right choice, and the H100 is only justified at the very top of the scale.

Get the matching right, and you are looking at savings of tens of thousands of dollars per year without sacrificing any performance that actually matters for your product.

Browse COLO BIRD's full GPU dedicated server range (A100, A40, A10, A16, A2, Tesla P4, and Quadro RTX 4000), with custom configurations, unmetered bandwidth options, free 250 Gbps DDoS protection, and 24–72 hour deployment across 250+ global locations.